[Note: This is a guest post from Natia Mestvirishvili, a Researcher at the International Centre for Migration Policy Development (ICMPD) and former Senior Researcher at CRRC-Georgia. This post was co-published with the Clarion.]

Intense public debate usually accompanies the publication of survey findings in Georgia, especially when the findings are about politics. The discussions are often extremely critical or even call for the rejection of the results.

Normally, criticism of surveys would focus on the shortcomings of the research process and help guide researchers towards better practices, making surveys a better tool for understanding society. In Georgia, unfortunately, most current criticism of surveys is counterproductive and driven mainly by an unwillingness to accept findings that the critics do not like. This blog post outlines some features of survey criticism in Georgia and highlights the need for constructive criticism aimed at improving research practice, which is essential for the development of a “culture of polling” in Georgia.

Criticism of surveys in Georgia is often caused by discrepancies between the findings and the critics’ own beliefs about public opinion. Hence, the critics claim that the findings do not correspond to ‘reality’. Or rather, to their reality.

But are surveys meant to measure ‘reality’? For the most part, no. Rather, public opinion polls measure and report public opinion, i.e., the views and opinions that people have, which are shaped not only by perceptions but also by misperceptions. There is no ‘right’ or ‘wrong’ opinion. Equally important, these are the opinions that people feel comfortable sharing during interviews, while talking to complete strangers. Consequently, and leaving aside deeply philosophical discussions about what reality is and whether it exists at all, public opinion surveys measure perceptions, not reality.

Among the many assumptions that may underlie criticism of surveys in Georgia, critics often suggest that:

- They know best what people around them think;

- What people around them think represents the opinions of the country’s entire population.

However, both of these assumptions are wrong, because, in fact:

- Although people in general believe that they know others well, they don’t. Extensive psychological research shows that there are common illusions which make us think we know and understand other people better than we actually do – even when it comes to our partners and close friends;

- Not only does everyone have a limited choice of opinions and points of view in their immediate surroundings compared to the ‘entire’ society, but it has also been shown that people are attracted to similarity. As a result, primary social groups are composed of people who are alike. Thus, people tend to be exposed to the opinions of their peers, people who think alike. There are many points of view in other social groups that a person may never come across, not to mention understand or hold;

- Even if a person has contact with a wide diversity of people, these contacts will never be enough to be representative of the entire society. Even if they were, individuals lack the ability to judge how opinions are distributed within a society.

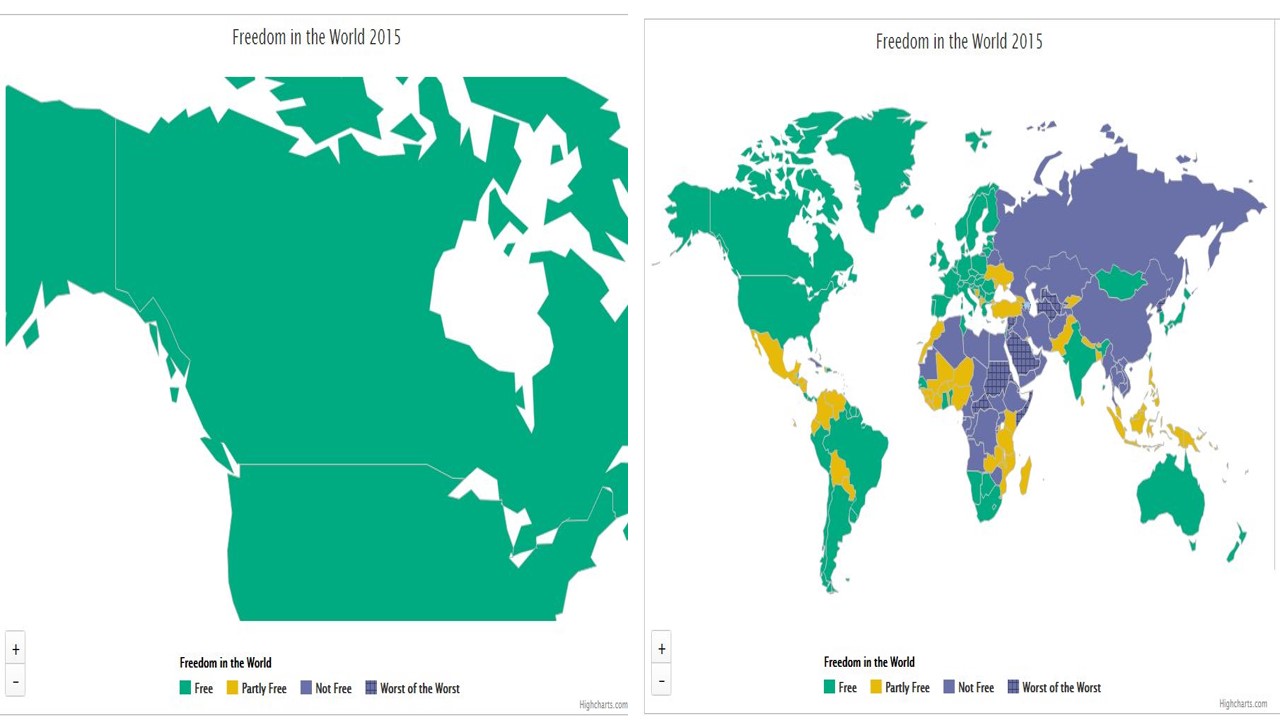

To make an analogy, assuming the opinions we hear around us can be generalized to the entire society is very similar to zooming in on a particularly large country, like Canada, on a map of a global freedom index, and assuming that since Canada is green, i.e. rated as “Free”, the same is true for the rest of the world. In fact, if we zoom out, we will be able to see that the whole world is all but green. Rather, it is very colorful, with most of the countries being of different colors than green, and “big” Canada is no indication of the state of the rest of the world.

Source: www.freedomhouse.org

People who assume that what those around them think (or, more precisely, what they believe those around them think) can be generalized to the whole country make a similar mistake.
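For readers who like to see the numbers, the mistake of generalizing from one's own circle can be illustrated with a small simulation. This is only a sketch under made-up assumptions: the population size, the 30% share holding a given opinion, the `homophily` parameter and the function name `simulate_estimates` are all hypothetical and do not come from any real survey. It compares the share of an opinion observed in a like-minded personal circle with the share found in a simple random sample.

```python
import random


def simulate_estimates(true_support=0.30, homophily=0.8,
                       circle_size=200, sample_size=2000, seed=42):
    """Compare a personal-circle estimate with a random-sample estimate.

    All parameter values are illustrative assumptions, not survey data.
    """
    rng = random.Random(seed)

    # Hypothetical population of 100,000 people; True = holds opinion A.
    population = [rng.random() < true_support for _ in range(100_000)]
    actual_share = sum(population) / len(population)

    # Personal circle of someone who holds opinion A: with probability
    # `homophily` an acquaintance is like-minded, otherwise the
    # acquaintance is drawn at random from the whole population.
    circle = [
        True if rng.random() < homophily else rng.choice(population)
        for _ in range(circle_size)
    ]
    circle_share = sum(circle) / circle_size

    # Simple random sample: every person has the same chance of selection.
    sample = rng.sample(population, sample_size)
    sample_share = sum(sample) / sample_size

    return actual_share, circle_share, sample_share


if __name__ == "__main__":
    actual, circle, sample = simulate_estimates()
    print(f"Actual share in population:  {actual:.2f}")
    print(f"Share seen in own circle:    {circle:.2f}")
    print(f"Share in random sample:      {sample:.2f}")
```

Under these assumptions, the share observed in the personal circle lands far above the population share, while the random sample stays close to it. Real social networks and real survey designs are of course far more complex than this toy model.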

Instead of objective and constructive criticism based on unbiased and informed opinions and professional knowledge, public opinion polls in Georgia are mostly discussed based on emotions and personal preferences. Professional expertise is almost entirely lacking in those discussions.

Politicians citing questions from the same survey in either a negative or positive context, depending on whether they like the results or not, is a good illustration of the above claim. For example, positive evaluations of a policy or development by the public are often proudly cited by political actors without any doubts about the quality of the survey. At the same time, low and/or decreasing public support for a particular party according to the findings of the same survey is “explained away” by the same actors as the result of poor data quality. Subsequently, politicians may express their distrust in the research institution which conducted the survey.

In Georgia and elsewhere, survey criticism should be focused on the process of research and should be aimed at its improvement rather than the rejection of the role and importance of polling. It is the duty of journalists, researchers and policymakers to foster healthy public debate on survey research. Instead of emotional messages aimed at demolishing trust in public opinion polls and pollsters in general, what is needed is rational and careful discussion of the research process and its limitations, of the research findings and their meaning and significance and, where possible, of potential improvements to survey practice.

Criticism focused on “unclear” or “incorrect” methodology should be further elaborated by professionally specifying the aspects that are unclear or problematic. Research organizations in Georgia will greatly appreciate criticism that asks specific questions aimed at improving the survey process. For example, does the sample design allow for the generalization of the survey results to the entire population? How were misleading questions avoided? How have the interviewers been trained and monitored to minimize bias and maximize the quality of the interviews?

This blog post has argued that survey criticism in Georgia is often based on inaccurate assumptions and conveys messages that do not help research organizations improve their practice. These messages are also often dangerous, as they encourage uninformed skepticism towards survey research in general. Rather than sending these unhelpful messages, I call on actors to engage in constructive criticism that will contribute to improving the quality of surveys in Georgia. This, in turn, will allow people’s voices to reach policymakers and policymakers’ decisions to be informed by objective data.

The second part of this blog post, to be published on January 23, continues the topic, focusing on examples of misinterpretation and misuse of survey data in Georgia.